Vibe Coding vs. Agentic Engineering: Why Secure Software Still Requires Engineers

Secure systems require more than prompt engineering.

Introduction

The software industry is moving through a major transformation right now. AI-assisted development tools are rapidly becoming part of everyday engineering workflows, and for good reason. When used correctly, these tools can dramatically accelerate development, reduce repetitive work, improve productivity, and help engineering teams move faster than ever before.

However, many organizations are beginning to discover an uncomfortable truth. Getting software to function is no longer the hard part. Building software that is secure, maintainable, operationally resilient, and architecturally sound still is.

Over the last year, I have watched more and more teams fall into what has come to be known as vibe coding. In practice, this means engineers continuously prompting AI systems until an application appears functional without fully understanding the underlying architecture, security implications, operational behavior, or long-term maintainability of the generated code.

Agentic engineering is fundamentally different. It is not anti-AI, nor is it resistance to modern development practices. It is the practice of experienced engineers using AI intentionally as an accelerator for engineering work instead of a replacement for engineering judgment. The engineer still owns the architecture, security model, operational behavior, code quality, and final implementation decisions.

The Problem with “It Works”

One of the biggest risks with AI-assisted development is that it creates the illusion of an engineering process. If the only metric is whether an application compiles, renders a page successfully, or returns the expected API response, insecure systems can appear successful right up through deployment, harboring latent flaws that only surface under real-world conditions.

Secure software engineering has never been about simply producing output. Engineers must understand where trust boundaries exist, how authentication and authorization decisions are enforced, how systems interact under failure conditions, and what operational exposure may exist beneath the surface of a working application.

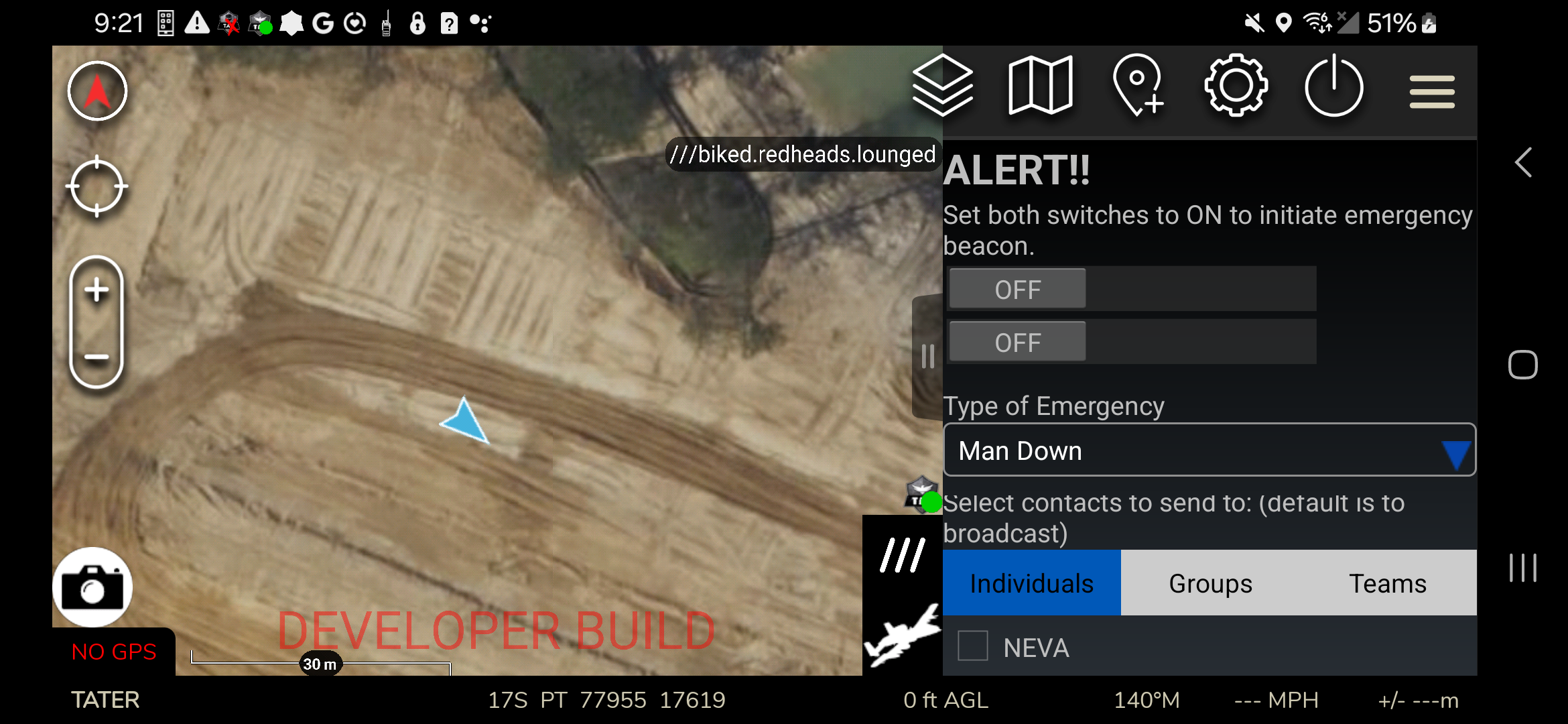

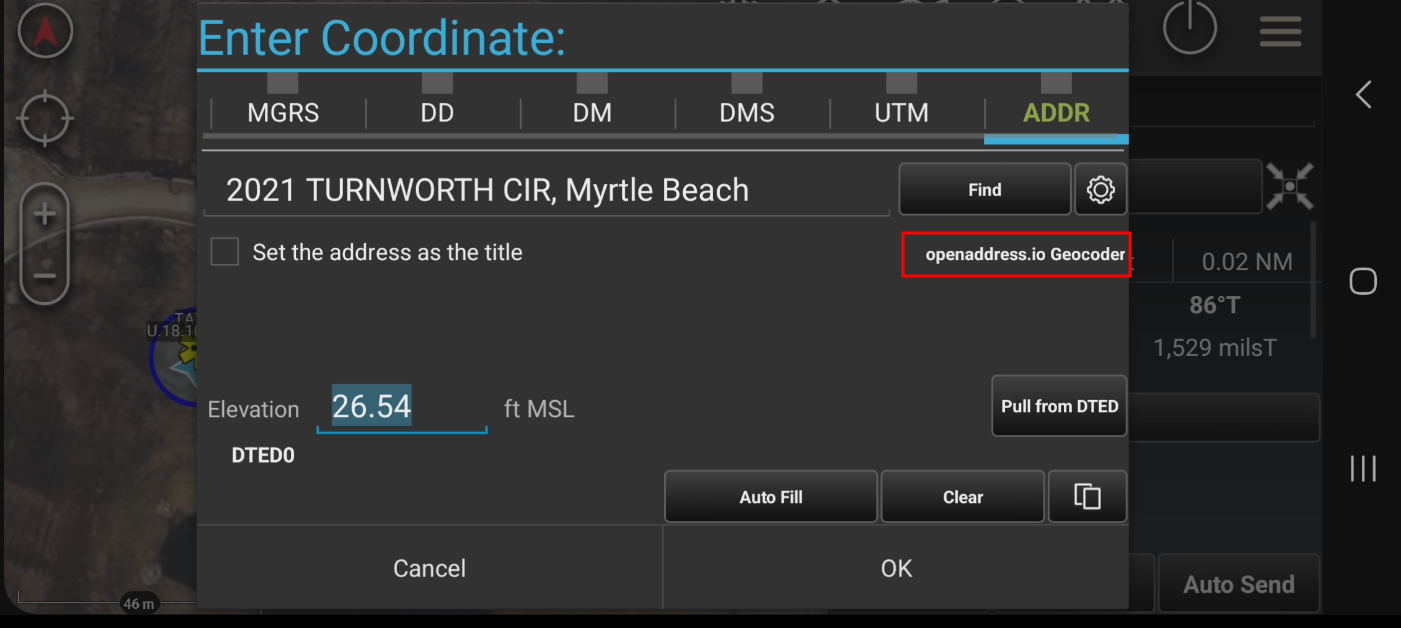

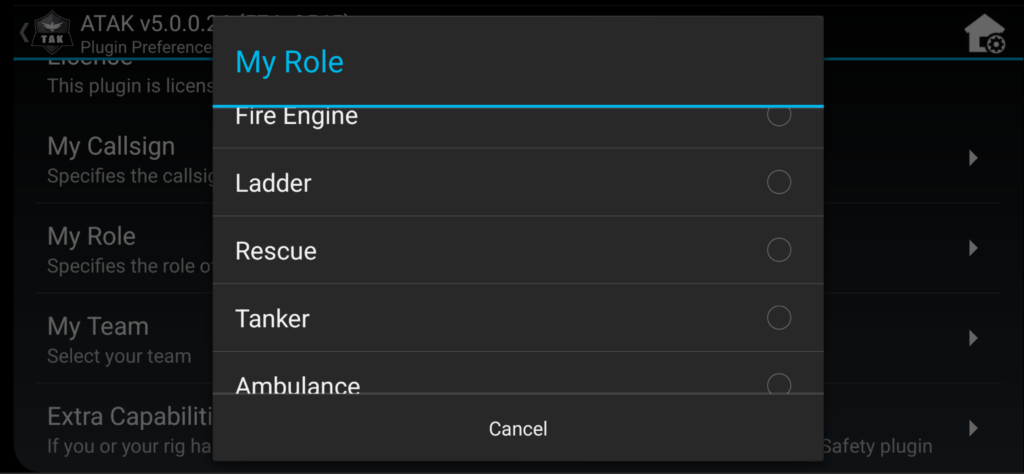

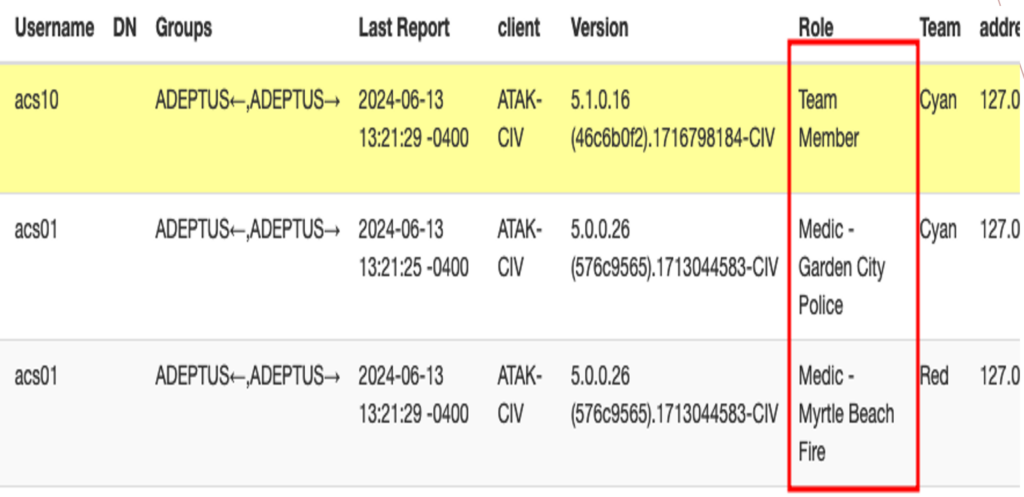

This becomes especially important in TAK ecosystems and other mission-critical operational environments where applications routinely integrate with drones, sensor platforms, mapping systems, video feeds, and external operational data sources. In those environments, poor architectural decisions are not isolated to a single application. They can impact data integrity, operational visibility, and trust across interconnected systems.

A customer-facing application that aggregates information from multiple backend services is not simply a front-end development problem. It is also a security, infrastructure, data ownership, and operational risk problem. AI can generate the code required to connect those systems together very quickly. What it cannot do independently is understand the operational consequences of those integrations. That responsibility still belongs to engineers.

Security Is an Architecture Problem First

One of the most dangerous patterns emerging in AI-assisted development is teams skipping architecture discussions, design reviews, and proper code reviews because AI tools make implementation feel inexpensive and fast. Teams convince themselves they can solve problems reactively as they appear rather than proactively designing systems correctly from the beginning.

None of this started with AI. Long before AI entered the workflow, teams were already bypassing architecture reviews, minimizing code reviews, and sacrificing long-term maintainability in the name of short-term velocity. AI has simply accelerated a behavior that already existed in many organizations, particularly those operating under aggressive deadlines and unrealistic delivery expectations.

As a result, development turns into a continuous cycle of generating code, testing functionality, patching defects, and repeating the process. Teams initially believe they are moving faster because features are appearing quickly, but unresolved technical debt quietly compounds beneath the surface. Every new feature introduces regressions somewhere else. Security gaps emerge in unexpected places because the original architecture never clearly defined trust boundaries, ownership responsibilities, or operational behavior between systems.

At some point, engineering velocity collapses entirely because the team is no longer building software intentionally. They are simply reacting to the consequences of earlier shortcuts.

A Real-World Example

Recently, I worked with an organization that hired what appeared on paper to be a highly qualified senior software engineer with significant AI development experience. The engineer was tasked with leading an internal project designed to aggregate data from multiple systems across the organization and expose portions of that information through a customer-facing application.

Initially, the project appeared to move very quickly. Features were implemented rapidly, integrations came together fast, and management saw visible progress. From the outside, the project looked successful.

However, significant architectural and security problems were developing beneath the surface. The engineer did not fully understand the underlying data models or how the information needed to be abstracted safely for customer use. Instead of designing clear trust boundaries and well-defined security controls, the application evolved organically through AI-assisted iteration, with security treated as a secondary concern rather than a foundational requirement.

Over time, internal resources began surfacing through the customer-facing application in ways they never should have been exposed. Those details were not immediately visible to end users, but they were visible in server logs, proxy behavior, and infrastructure telemetry. The situation deteriorated further when production code was pushed multiple times without meaningful human review, and developers lost confidence not only in the implementation itself but in the overall engineering process surrounding the project.

Importantly, the root problem was not AI. The real problem was the absence of systems thinking, architecture discipline, security ownership, and engineering accountability.

What This Actually Looks Like in Code

The story above describes what went wrong at an organizational level. But it helps to see what these failures look like at the code level, because that is where the vulnerability actually lives and where engineers have the power to stop it.

Consider a common scenario: a backend API that serves data to a customer-facing application. The application needs to return order information for the currently logged-in user. A developer prompts an AI tool to generate the endpoint, the AI produces working code quickly, the tests pass, and the feature ships. Here is a simplified version of what that generated endpoint might look like:

```javascript
// Vibe-coded endpoint: passes every functional test, ships to production
app.get('/api/orders', async (req, res) => {
  const { userId } = req.query;
  const orders = await db.query(
    'SELECT * FROM orders WHERE user_id = ' + userId
  );
  res.json(orders);
});
```

This code works. The page loads. The data appears. Management sees a completed feature. But there are three serious vulnerabilities sitting in eight lines of code, and none of them would be caught by a functional test.

First, the query is constructed by concatenating a user-supplied value directly into a SQL string. This is a textbook SQL injection vulnerability. An attacker who passes 1 OR 1=1 as the userId turns the WHERE clause into a condition that is true for every row and receives every order record in the database, not just their own. Second, the userId is pulled from the query string, which means any caller (the endpoint does not even require authentication) can request any other user’s orders simply by changing a number in the URL. There is no check confirming that the requesting user actually owns the records being returned. Third, SELECT * returns every column in the orders table, including any fields that hold internal pricing data, supplier identifiers, fulfillment system references, or other backend details that were never intended for customer exposure.
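To make the first vulnerability concrete, here is a small sketch that reproduces only the string concatenation from the endpoint above and prints the SQL it would send for a normal request and for an attacker's input. The buildVibeQuery helper is hypothetical and exists only for illustration. Express delivers query-string values as strings, so both inputs are passed as strings.

```javascript
// Hypothetical helper reproducing the vulnerable concatenation from the
// vibe-coded endpoint, so we can inspect the exact SQL text it produces.
function buildVibeQuery(userId) {
  return 'SELECT * FROM orders WHERE user_id = ' + userId;
}

// A well-behaved client sends a plain ID...
const normal = buildVibeQuery('42');
// ...but the client controls the entire query-string value.
const injected = buildVibeQuery('1 OR 1=1');

console.log(normal);   // SELECT * FROM orders WHERE user_id = 42
console.log(injected); // SELECT * FROM orders WHERE user_id = 1 OR 1=1
```

Because = binds more tightly than OR, the injected clause is evaluated as (user_id = 1) OR (1=1), which is true for every row. A parameterized query sends the same input to the database as a single bound value rather than as SQL text, so it cannot change the structure of the statement.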

An agentic engineer approaching the same requirement would produce something fundamentally different:

```javascript
// Agentic engineering approach: authorization enforced, injection eliminated,
// response scoped to what the customer is actually allowed to see
app.get('/api/orders', authenticate, async (req, res) => {
  const requestingUserId = req.user.id; // identity from verified session token, not user input
  const orders = await db.query(
    'SELECT order_id, status, total, created_at FROM orders WHERE user_id = $1',
    [requestingUserId] // parameterized query: the value is sent as data, never as SQL
  );
  res.json(orders);
});
```

The differences are deliberate and meaningful. The user identity comes from a verified session token on the server side, not from anything the client can manipulate. The query is parameterized, which eliminates the entire class of SQL injection attacks regardless of what input is provided. The SELECT statement names only the columns the customer is permitted to see, so internal fields never leave the database layer. And the authenticate middleware ensures the endpoint cannot be reached at all without a valid session.
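The endpoint above depends on an authenticate middleware that is not shown. The following is a minimal sketch of what such middleware might do, under clearly stated assumptions: the token format (`<userId>.<hex HMAC-SHA256 signature>`), the SESSION_SECRET environment variable, and the sign and issueToken helpers are all hypothetical. It omits expiry, rotation, and revocation, so a production system would rely on an established session or JWT library rather than a hand-rolled scheme. What it does illustrate is the key property: req.user.id is set only after the server verifies the signature, so the client cannot choose its own identity.

```javascript
const crypto = require('node:crypto');

// HYPOTHETICAL secret source; the fallback exists only so the sketch runs locally.
const SESSION_SECRET = process.env.SESSION_SECRET || 'dev-only-secret';

// HMAC-SHA256 over the user ID, hex-encoded.
function sign(userId) {
  return crypto.createHmac('sha256', SESSION_SECRET).update(userId).digest('hex');
}

// Issues a token in the hypothetical "<userId>.<signature>" format.
function issueToken(userId) {
  return `${userId}.${sign(userId)}`;
}

// Express-style middleware: rejects the request unless the bearer token's
// signature matches the user ID it claims.
function authenticate(req, res, next) {
  const header = req.headers['authorization'] || '';
  const token = header.startsWith('Bearer ') ? header.slice(7) : '';
  const dot = token.lastIndexOf('.');
  if (dot <= 0) {
    return res.status(401).json({ error: 'unauthorized' });
  }

  const userId = token.slice(0, dot);
  const given = Buffer.from(token.slice(dot + 1), 'hex');
  const expected = Buffer.from(sign(userId), 'hex');

  // timingSafeEqual throws on unequal lengths, so compare lengths first.
  if (given.length !== expected.length || !crypto.timingSafeEqual(given, expected)) {
    return res.status(401).json({ error: 'unauthorized' });
  }

  // Identity now comes from a verified token, never from the query string.
  req.user = { id: userId };
  next();
}
```

It would be registered exactly as in the route above, as the second argument to app.get, so the handler only runs after next() has been called with a verified req.user in place.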

The agentic engineer could absolutely have used AI to help write this code. The difference is that they understood what the code needed to enforce before they asked for it, and they reviewed what came back with enough domain knowledge to recognize what was missing. The prompt is not the engineering. The judgment is.

This particular pattern, pulling identity from user-controlled input instead of a verified server-side session, is one of the most common vulnerabilities I see in AI-generated API code. If you are reviewing AI-generated backend endpoints, it is one of the first things to check.
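The same rule applies when an identifier legitimately comes from the URL, such as fetching a single order by ID. The sketch below assumes a hypothetical GET /api/orders/:orderId route that runs after authentication, and uses an in-memory ORDERS array with made-up rows in place of a database. The order ID is taken from the path, but the lookup is always scoped to the server-verified req.user.id, and a hypothetical toCustomerView allowlist controls which fields leave the server.

```javascript
// HYPOTHETICAL in-memory stand-in for the orders table (made-up rows).
// A database-backed version would use a parameterized query such as:
//   SELECT ... FROM orders WHERE order_id = $1 AND user_id = $2
const ORDERS = [
  { order_id: 'A-1', user_id: '42', status: 'shipped', total: 19.99,
    created_at: '2024-01-05', supplier_ref: 'SUP-778', internal_cost: 7.1 },
  { order_id: 'B-2', user_id: '99', status: 'pending', total: 250.0,
    created_at: '2024-02-11', supplier_ref: 'SUP-112', internal_cost: 140.0 },
];

// Only these fields may cross the trust boundary to the customer.
const CUSTOMER_FIELDS = ['order_id', 'status', 'total', 'created_at'];

function toCustomerView(order) {
  return Object.fromEntries(CUSTOMER_FIELDS.map((field) => [field, order[field]]));
}

// Handler for the hypothetical GET /api/orders/:orderId route.
// The order ID comes from the URL; ownership is checked against req.user.id,
// which authentication middleware set from a verified token.
function getOrder(req, res) {
  const order = ORDERS.find(
    (o) => o.order_id === req.params.orderId && o.user_id === req.user.id
  );
  if (!order) {
    // Same response for "does not exist" and "not yours".
    return res.status(404).json({ error: 'not found' });
  }
  return res.json(toCustomerView(order));
}
```

Returning 404 rather than 403 for another user's order is a deliberate choice: it avoids confirming that the ID exists. In a real database-backed version, the ownership condition belongs in the parameterized WHERE clause itself, so rows the user does not own are never fetched in the first place.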

Agentic Engineering Is Different

AI itself is not the enemy of secure software development. In fact, when used correctly, it can significantly improve engineering efficiency and even strengthen security outcomes. Experienced engineers can use AI to accelerate repetitive development tasks, improve documentation, generate test scaffolding, assist with DevSecOps workflows, analyze code paths, and rapidly prototype integrations. Used responsibly, these tools reduce operational friction and allow teams to focus more energy on architecture, security, and system reliability.

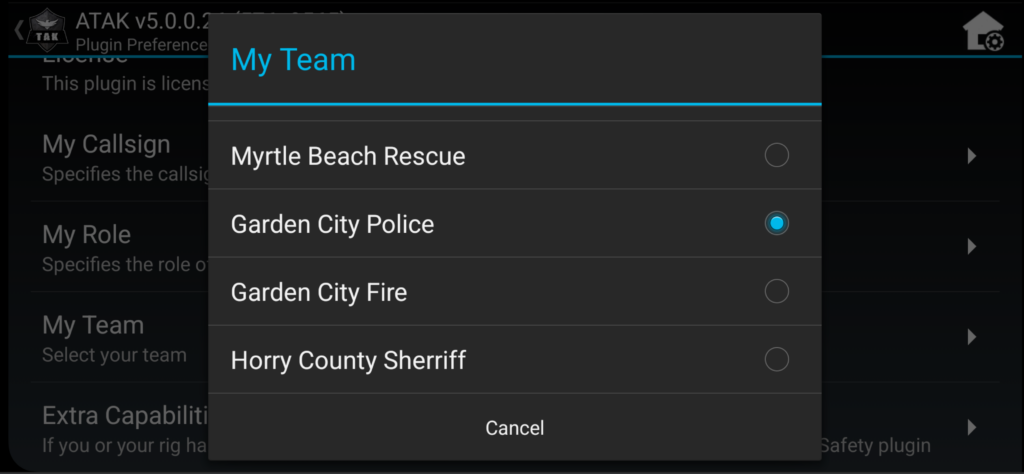

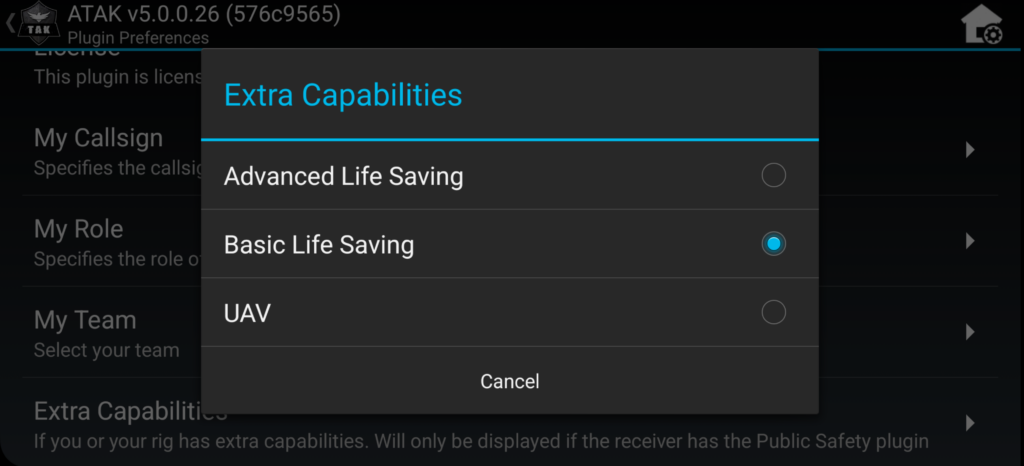

One of the areas where AI still falls short is domain expertise. Modern AI tools can generate large amounts of functional code very quickly, but they do not inherently understand the operational environment the software is being built for. They do not understand organizational workflows, mission priorities, regulatory constraints, data sensitivity, or how users actually interact with systems in the real world. That gap becomes especially significant in cybersecurity, TAK, and other operational technology environments where software decisions carry consequences beyond the application itself.

Without that domain expertise, teams often end up generating technically functional solutions that fail operationally, architecturally, or from a security standpoint, because the underlying assumptions were never fully understood in the first place. Agentic engineers close that gap. They understand the system before generating code, validate assumptions instead of blindly accepting generated output, and review AI-generated implementations critically with full awareness of the operational and security implications.

This is particularly important in cybersecurity and TAK-related environments where software defects are not simply bugs. In many cases, they become operational risks that can impact mission effectiveness, situational awareness, data integrity, or service availability.

Secure Systems Still Require Human Review

One of the most concerning trends emerging from aggressive AI-assisted development is the erosion of code review culture. Many organizations are now merging AI-generated code at a pace that outstrips meaningful peer review, especially when leadership prioritizes development velocity above all else.

Code reviews exist for far more than catching syntax mistakes or broken logic. They validate architectural consistency, security assumptions, operational impact, maintainability, and unintended side effects that automated tooling may never correctly identify. In mature engineering organizations, code reviews also create accountability. They force engineers to explain design decisions, justify security assumptions, and ensure changes align with broader architectural goals.

Static analysis tools and AI scanners provide real value, but they cannot holistically evaluate operational risk the way experienced engineers can. AI can generate insecure code just as quickly as it generates functional code. Without disciplined review processes, organizations risk deploying vulnerabilities at machine speed.

Conclusion

AI-assisted development is not going away, nor should it. The productivity gains are real, and engineers who refuse to learn these tools will eventually struggle to remain competitive. The organizations that succeed long term, however, will not be the ones blindly automating software development. They will be the organizations that combine AI acceleration with strong architecture practices, security-first engineering, DevSecOps discipline, and experienced technical leadership.

At Adeptus Cyber Solutions, we use AI-assisted development tools across cybersecurity, TAK, and software engineering engagements because they provide real value when applied correctly. We also recognize that secure systems still require experienced engineers who understand operational security, architecture, infrastructure interactions, and mission impact. Our approach has always centered on disciplined engineering practices, because that is what secure operational systems demand.

Whether you take on a development effort internally or hire out the work, remember this: AI can accelerate software development dramatically. Prompting can generate software.

Engineering is what makes it secure.